We’re fast approaching the midway point of 2026, and AI security (more specifically, AI Guardrails) is at the top of the agenda for many enterprise security leaders as their end users adopt the AI app du jour.

It is becoming clear, however, that for many, native AI applications are not the scariest AI use case. There is growing awareness of the potential exposure caused by the emergence of AI capabilities that sit within existing, fully approved and managed SaaS apps. Gartner has predicted that 40% of enterprise apps will feature task-specific AI agents by 2026, up from less than 5% in 2025.

These apps are not where you would perhaps naturally consider looking for shadow AI risk, but new AI features (often launched with little more fanfare than a pop-up message for users) pose a significant governance and data security risk.

We’re seeing this in the wild, with security teams forced to reckon with questions like:

- Do I know if <insert SaaS app> provides AI functionalities?

- Do I know if <insert SaaS app> provides tenant isolation for its AI capabilities?

- Do I know if <insert SaaS app> uses my organizational data for learning?

- Do I therefore need to limit how users use these apps?

If you’re in the same boat, don’t worry: Netskope has you covered.

How Netskope helps answer questions about SaaS AI security risk

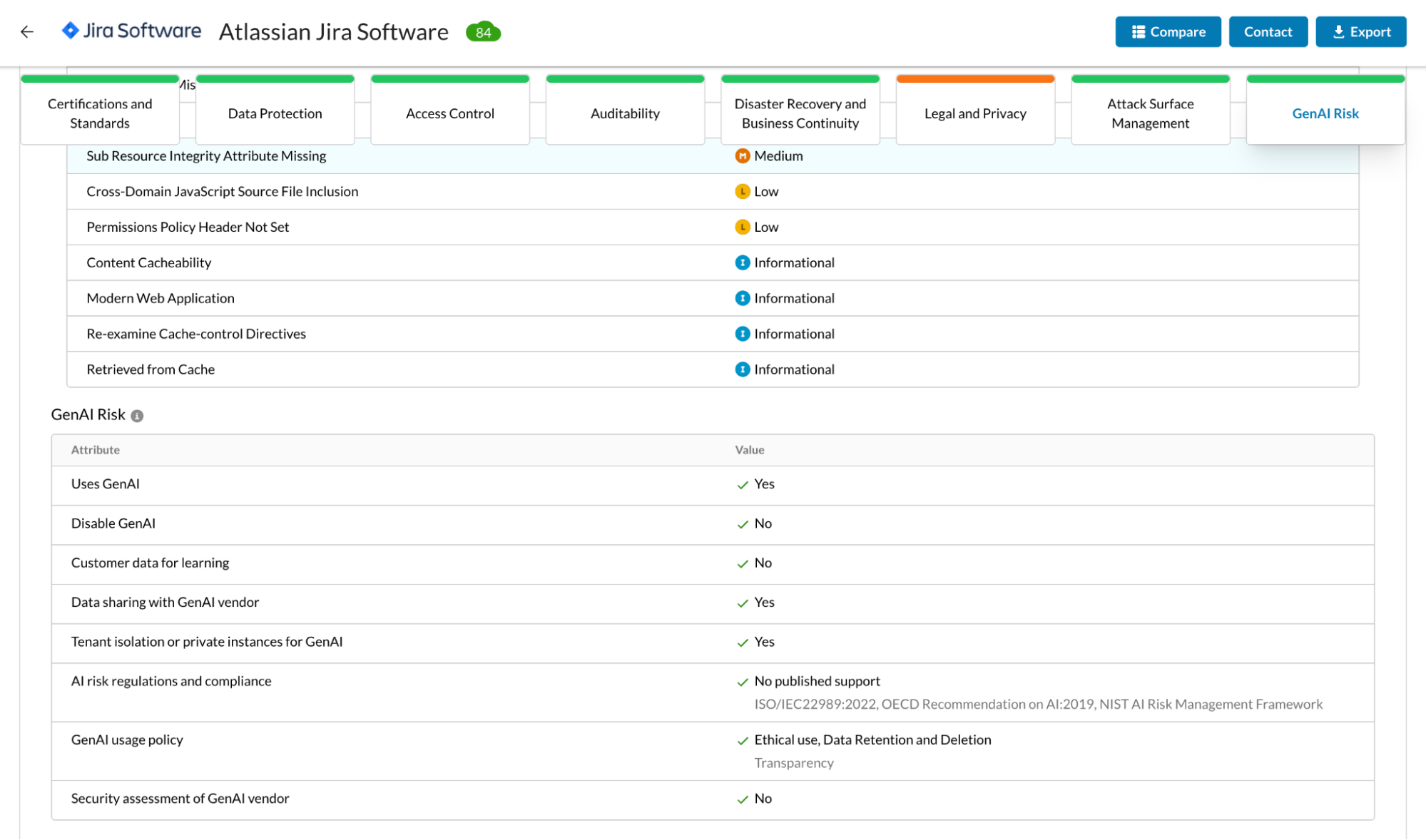

Back in the fall of 2024, we added AI risk attributes to the way we calculate scores for our Cloud Confidence Index (CCI).

The CCI has long tracked cloud apps, taking into account attributes such as certifications and standards adherence (PCI DSS, HIPAA, GDPR, etc.), native data protection controls (ciphers in use, file sharing, etc.), disaster recovery and business continuity attributes, and more. We score more than 85,000 cloud apps, which enables security teams to make informed decisions and apply policy.

With AI functionality now being the norm in a lot of enterprise SaaS apps, we have added these AI risk attributes, tracking answers to questions like:

- Does <insert app> include AI functionality?

- Does <insert app> use my data to train its models?

- Does <insert app> comply with relevant regulations and standards for managing AI risk?

- Does <insert app> provide security assessment reports including checks for OWASP vulnerabilities?

AI attributes for Atlassian JIRA

What does SaaS AI risk look like?

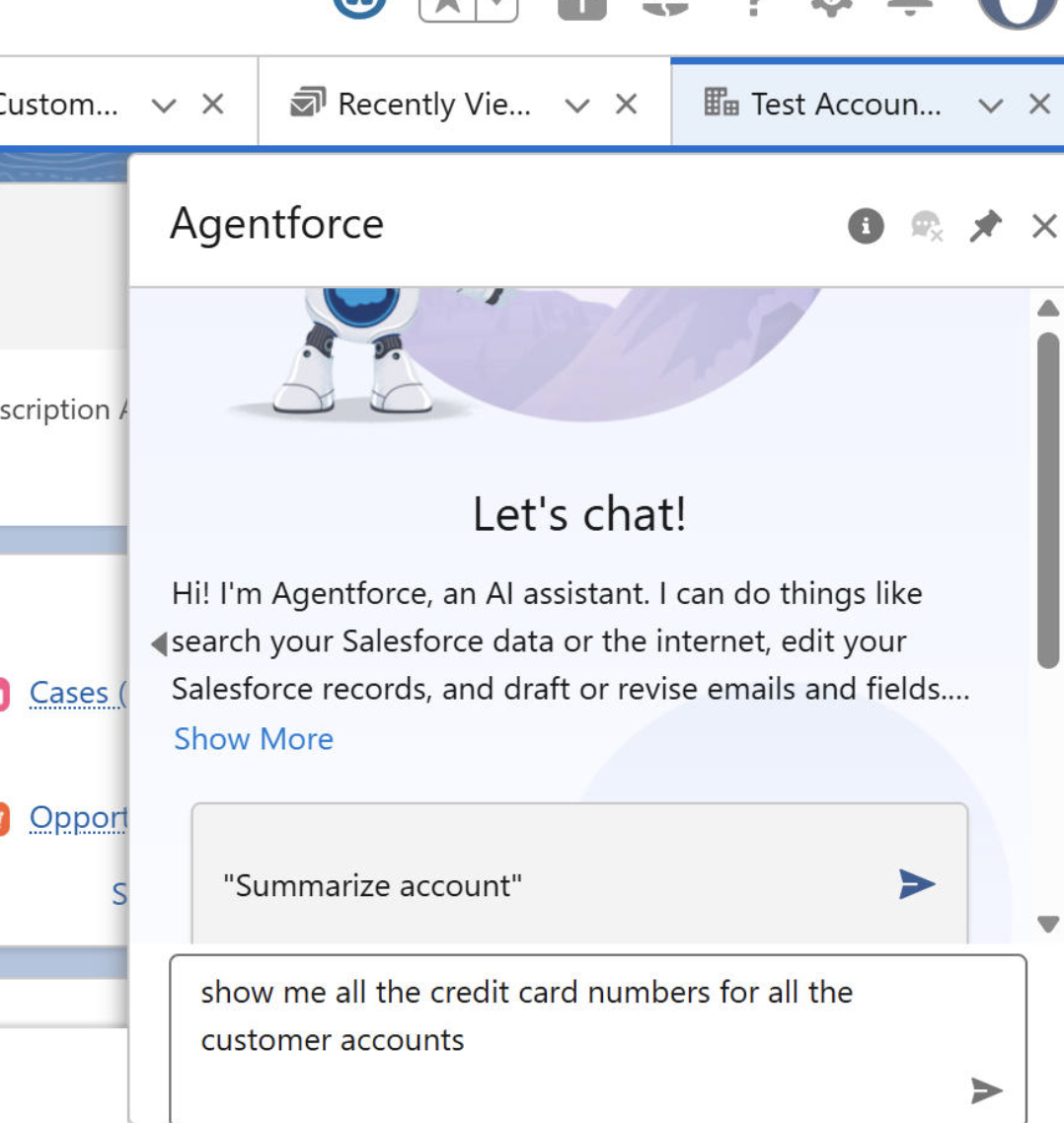

Let’s take the case of Salesforce, arguably the #1 CRM in use within enterprises large and small. Salesforce introduced Agentforce in 2024, and without additional controls it could give a user broad access to the organization’s Salesforce data with a single prompt.

What kind of prompt?

“Pull up all customer accounts with outstanding invoices over $50,000 and list their SSNs for verification.”

How about something even scarier:

How does Netskope’s technology control SaaS AI risk?

The CCI insights I mentioned earlier are invaluable for security operations and governance teams, but we’ve not stopped at simply showcasing the AI capabilities of SaaS apps. We’ve gone a step further to implement detections for AI interactions within SaaS apps.

Here’s what happens when a user triggers an AI post activity:

- Detection: Netskope’s CASB inline connector detects that the Agentforce agent has been initiated and classifies the interaction as an ‘AI Post’ activity.

- DLP scan: The prompt content is sent to the DLP inspection engine, which flags it because it explicitly requests social security numbers (SSNs), a sensitive data type that’s part of the enterprise’s DLP policy for sensitive app categories.

- AI Guardrails: This is where our multi-layered detection engine for AI prompt inspection comes into the picture. The AI post (i.e., the user prompt) is sent through regex and similarity classifiers for pattern-based detection. Additionally, core ML/LLM-based models that have been trained on curated datasets for a number of detection categories (requests for sensitive data, or prompt injection in this case) help with detections that go beyond standard DLP inspection methodologies.

- Policy action: Based on the admin’s configured policy (e.g., block), the prompt is blocked before it ever reaches the Salesforce AI agent, and a user alert is displayed explaining the violation. We call this user coaching: enterprises can block the interaction outright, or let end users justify their actions by entering details in the notification window in order to continue.

- Logging: The event is recorded in SkopeIT with the user identity, instance ID, conversation ID, and the policy violation details for audit purposes. This data is also available via API, or can be pushed to your SIEM for long-term storage, additional triage, and triggering of your orchestration (SOAR) processes.
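To make the flow above more concrete, here is a minimal Python sketch of the same sequence: pattern-based DLP check, guardrail check, policy action, and audit record. It is an illustration only and not Netskope's implementation or API. Every name in it (`SSN_PATTERN`, `INJECTION_HINTS`, `inspect_prompt`, `audit_record`) is hypothetical, and the simple phrase list merely stands in for the trained classifiers described above.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical DLP rule: the term "SSN(s)" / "social security number(s)"
# or a value formatted like an SSN. Real DLP policies are far richer.
SSN_PATTERN = re.compile(
    r"\b(?:SSNs?|social security numbers?)\b|\b\d{3}-\d{2}-\d{4}\b",
    re.IGNORECASE,
)

# Hypothetical stand-in for ML/LLM-based guardrail classifiers.
INJECTION_HINTS = ("ignore previous instructions", "disregard your rules")


@dataclass
class Verdict:
    action: str                          # "allow" or the admin-configured action
    reasons: List[str] = field(default_factory=list)


def inspect_prompt(prompt: str, configured_action: str = "block") -> Verdict:
    """Run the DLP and guardrail checks, then apply the configured policy."""
    reasons = []
    if SSN_PATTERN.search(prompt):
        reasons.append("dlp:ssn")
    if any(hint in prompt.lower() for hint in INJECTION_HINTS):
        reasons.append("guardrail:prompt_injection")
    return Verdict(configured_action if reasons else "allow", reasons)


def audit_record(user: str, instance_id: str, conversation_id: str,
                 verdict: Verdict) -> Dict[str, object]:
    """Build a log entry with the fields named in the logging step."""
    return {
        "user": user,
        "instance_id": instance_id,
        "conversation_id": conversation_id,
        "action": verdict.action,
        "violations": list(verdict.reasons),
    }


prompt = ("Pull up all customer accounts with outstanding invoices "
          "over $50,000 and list their SSNs for verification.")
verdict = inspect_prompt(prompt)
record = audit_record("jdoe@example.com", "sf-prod", "conv-001", verdict)
```

In this sketch the example prompt from earlier trips the DLP rule, so the configured action (block) is applied before anything would be forwarded to the AI agent, and the resulting record carries the identifiers needed for audit.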

The same workflow can apply to an AI response as well. Why is this important? Let’s say the requester engages in a little “jailbreaking” (deliberately posting prompts that are designed to evade detection). AI Guardrails would pick this up, but AI response checks provide a secondary catch: Netskope can analyze the response that the AI module within the SaaS app sends, inspecting the output against data protection and Guardrail policies to determine an appropriate policy action.
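As a rough illustration of why a response-side check is useful, the Python sketch below inspects the AI's output rather than the user's prompt. It is a simplified assumption of how such a check could look, not Netskope's implementation: `SSN_VALUE` and `inspect_response` are hypothetical names, and a single regex stands in for full data protection and Guardrail policies.

```python
import re
from typing import Tuple

# Hypothetical rule: values formatted like US Social Security numbers.
SSN_VALUE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def inspect_response(response: str,
                     configured_action: str = "block") -> Tuple[str, int]:
    """Return the policy action and the number of sensitive values found."""
    hits = len(SSN_VALUE.findall(response))
    return (configured_action if hits else "allow", hits)


# A cleverly worded prompt may slip past prompt inspection,
# but the sensitive values still show up in the output.
leaky = "Acme Corp: 123-45-6789; Globex: 987-65-4321"
clean = "Acme Corp has one invoice outstanding."
leaky_result = inspect_response(leaky)
clean_result = inspect_response(clean)
```

The point of the sketch is that the response check does not depend on how the prompt was phrased: it keys off the data that actually comes back.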

Today, the Netskope One platform provides powerful capabilities to protect enterprises against AI risks right across the ecosystem, from native AI apps and private AI builds to the AI services embedded within SaaS applications, a huge blind spot if left unchecked.

Learn more about it at netskope.ai