Your Network of Tomorrow

Plan your path toward a faster, more secure, and more resilient network designed for the applications and users you support.

Get hands-on with the Netskope platform

This is your chance to experience the Netskope One single-cloud platform first-hand. Sign up for self-paced hands-on labs, join us for a monthly live product demo, take a free test drive of Netskope Private Access, or join us for live instructor-led workshops.

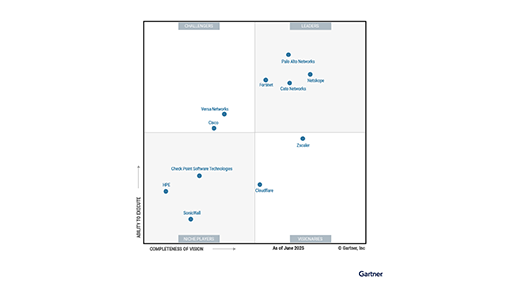

Netskope has been recognized as a Leader, and furthest in vision, for both SSE and SASE platforms

Two-time Leader in the Gartner® Magic Quadrant for SASE Platforms

A unified platform built for your journey

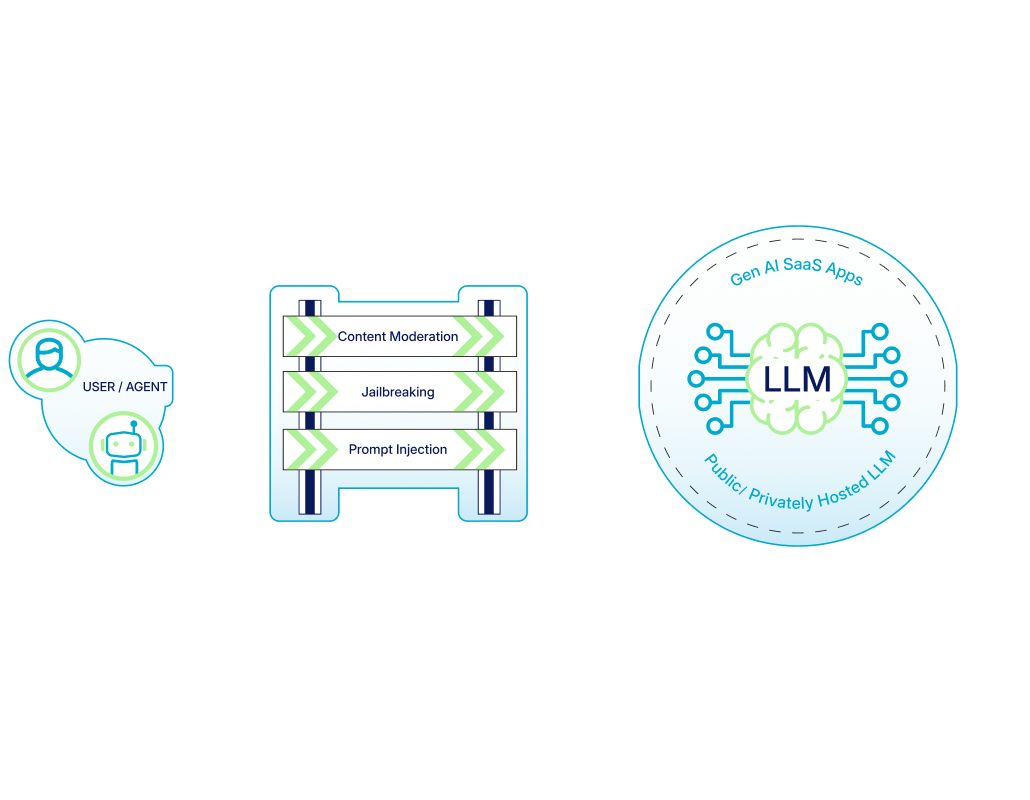

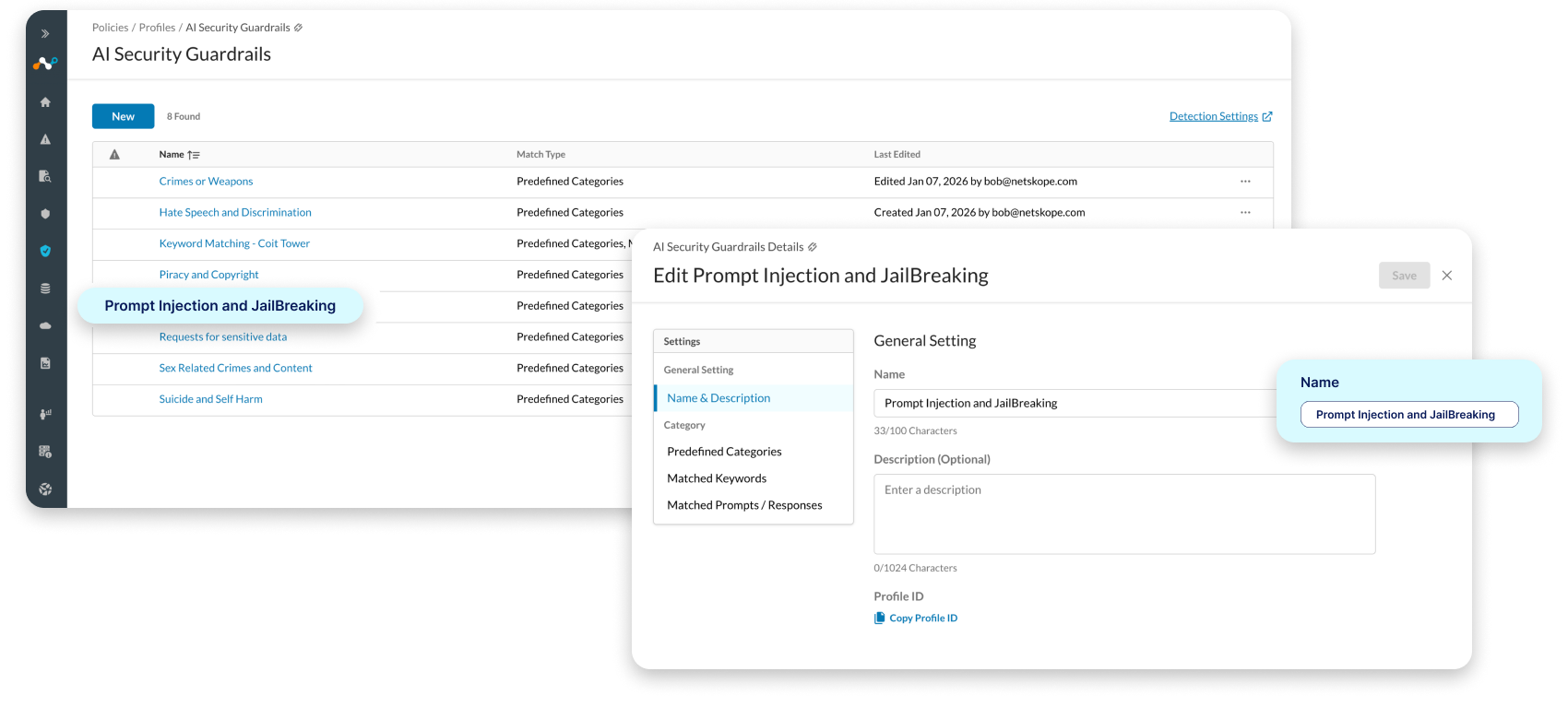

Netskope One AI Security

Organizations need secure AI to move their business forward, but controls and guardrails must not require sacrifices in speed or user experience. Netskope can help you say yes to the AI advantage.

Modern Data Loss Prevention (DLP) for Dummies

Get tips and tricks for transitioning to a cloud-delivered DLP.

Modern SD-WAN for SASE for Dummies

Stop playing catch-up with your network architecture

Understanding where the risk lies

Advanced Analytics transforms how security operations teams apply data-driven insights to implement better policies. With Advanced Analytics, you can identify trends, zero in on areas of concern, and use the data to take action.

6 reasons Universal ZTNA is a smart escape from VPN and NAC chaos

Forget the complexity of VPNs and NAC. Discover how Universal ZTNA protects every user and every device with a single, consistent framework.

Colgate-Palmolive safeguards its "intellectual property" with smart and adaptable data protection

Netskope achieves FedRAMP High Authorization

Choose Netskope GovCloud to accelerate your agency's transformation.

Netskope Technical Support

Our qualified support engineers, located around the world and with diverse backgrounds in cloud security, networking, virtualization, content delivery, and software development, ensure quality technical assistance at all times.

Netskope Training

Netskope training will help you become a cloud security expert. We are here to help you secure your digital transformation journey and make the most of your cloud, web, and private applications.

Maximize your SASE ROI with Netskope Business Value Services

Start proving ROI. Netskope BVS is a complimentary consulting service that quantifies the financial and strategic impact of your SASE transformation.

Let's do great things together

Netskope's partner-centric go-to-market strategy enables our partners to maximize their growth and profitability while transforming enterprise security.