The AI Security Playbook

This playbook explores the six main security challenges organizations face when adopting AI, along with proven, real-world strategies for addressing them.

Get hands-on with the Netskope platform

Here is your chance to experience the Netskope One single-cloud platform first-hand. Sign up for self-paced, hands-on labs, join us for monthly live product demos, take a free test drive of Netskope Private Access, or attend live, instructor-led workshops.

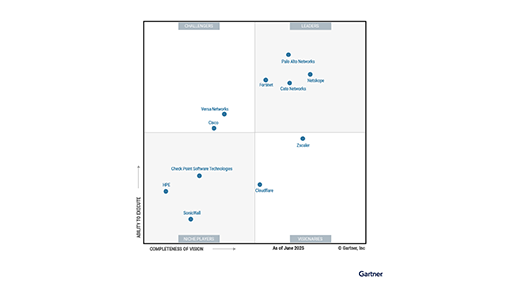

Netskope is recognized as a Leader, positioned furthest in vision, for both SSE and SASE platforms

2X a Leader in the Gartner® Magic Quadrant for SASE Platforms

One unified platform built for your journey

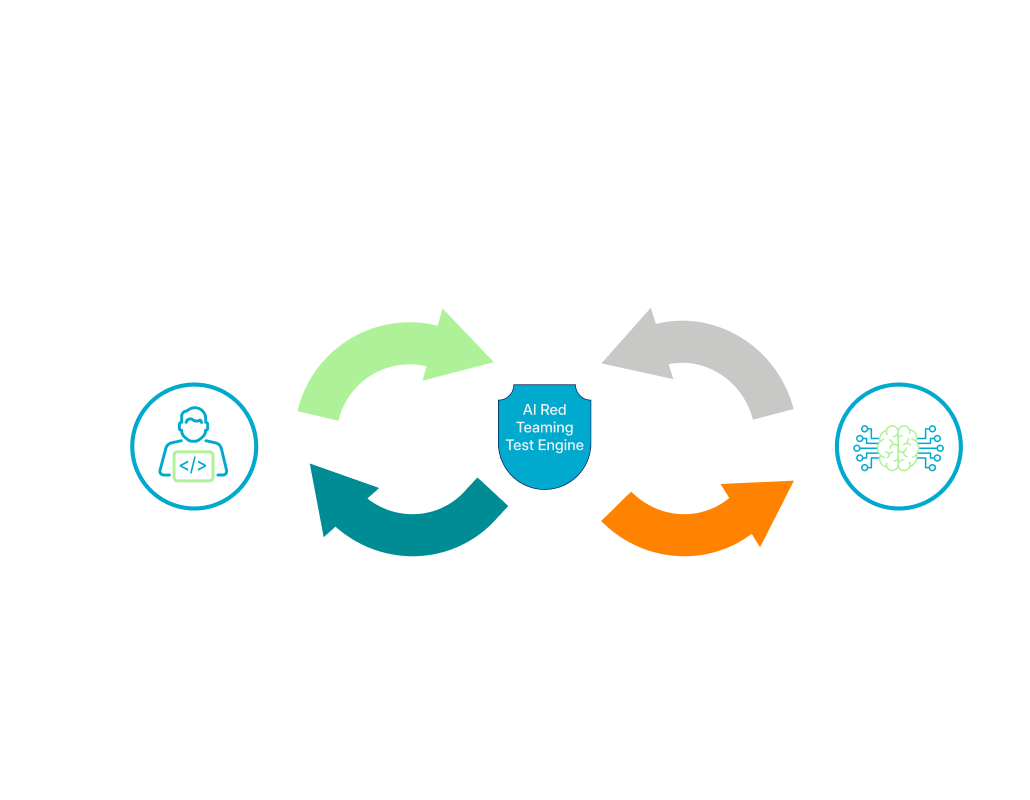

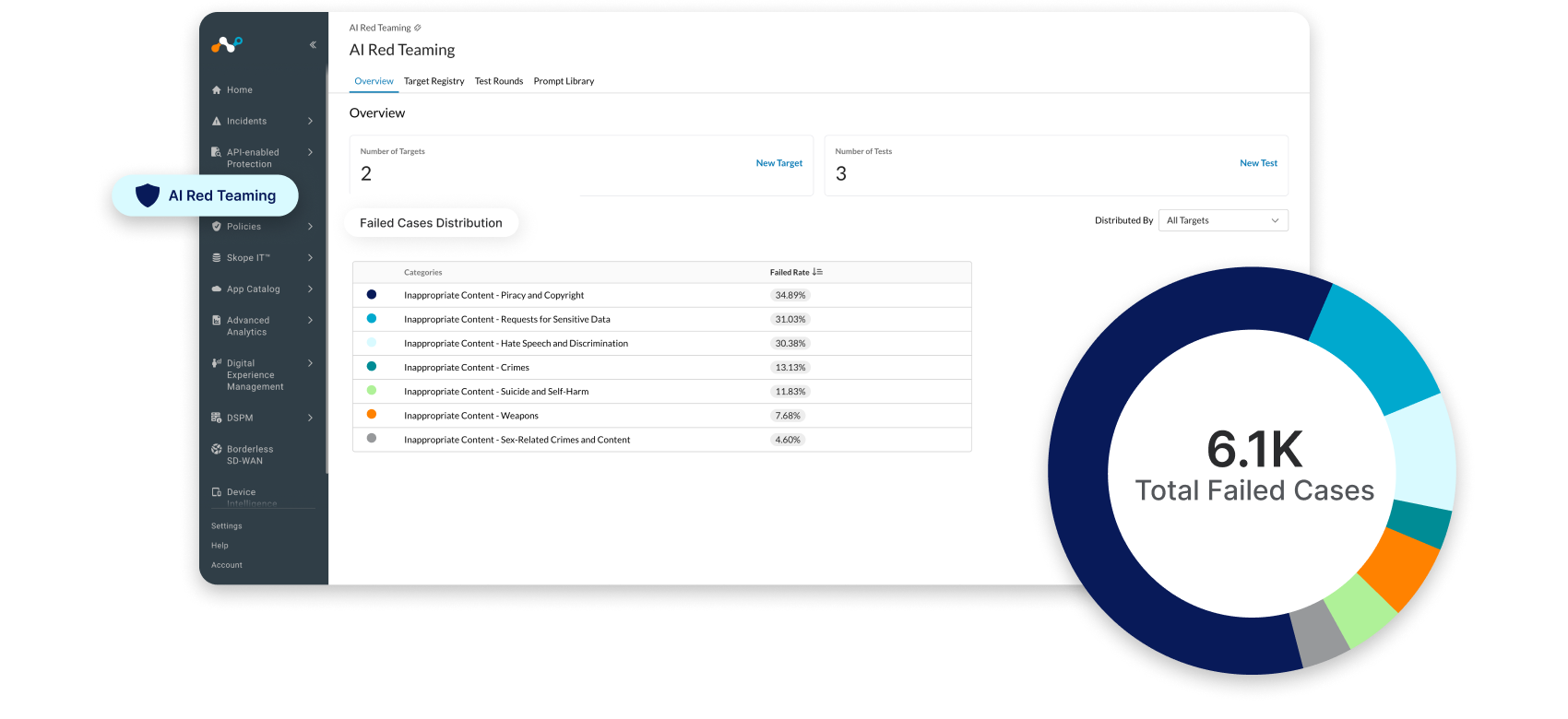

Netskope One AI Security

Organizations need secure AI to drive their business forward, but controls and guardrails must not compromise speed or the user experience. Netskope can help you say yes to the advantages of AI.

Modern Data Loss Prevention (DLP) for Dummies

Get tips and tricks for transitioning to a cloud-delivered DLP.

Understanding where the risks are

Advanced Analytics transforms the way security operations teams apply data-driven insights to implement better policies. With Advanced Analytics, you can identify trends, focus on areas of concern, and use the data to take action.

6 reasons why Universal ZTNA is a smart solution for escaping the chaos of VPNs and NAC

Leave the complexity of VPNs and NAC behind. Learn how Universal ZTNA protects every user and device with one consistent framework.

BDO unites networking and security to protect a cloud-first, AI-ready infrastructure.

Netskope achieves FedRAMP High Authorization

Choose Netskope GovCloud to accelerate your agency's transformation.

The Lens

Read about the latest news and opinions from the team at Netskope. The Lens combines our blogs, our podcasts and case studies, with new content added every week.

Netskope Technical Support

Our qualified support engineers are located around the world and bring diverse backgrounds in cloud security, networking, virtualization, content delivery, and software development, ensuring timely, high-quality technical assistance.

AI in the Fast Lane

Netskope's AI in the Fast Lane roadshow brings security professionals together to discuss how organizations are using AI today and how a comprehensive security strategy can create a smarter, safer, and future-ready model.

Netskope Training

Netskope training will help you become a cloud security expert. Count on us to help you secure your digital transformation journey and get the most out of your cloud, web, and private applications.

Maximize the return on your Secure Access Service Edge (SASE) investment with Netskope's value-added services.

Start proving return on investment (ROI). Netskope BVS is a free consulting service that quantifies the financial and strategic impact of your SASE transformation.

Let's do great things together

Netskope's partner-focused go-to-market strategy enables our partners to maximize their growth and profitability while transforming enterprise security.