ChatGPT use is increasing exponentially among enterprise users, who rely on it to help with the writing process, to explore new topics, and to write code. However, users need to be careful about what information they submit to ChatGPT, because ChatGPT does not guarantee data security or confidentiality. Users should avoid submitting any sensitive information, including proprietary source code, passwords and keys, intellectual property, or regulated data. This blog post explores how well enterprise users are following this guidance.

Users posting sensitive data to ChatGPT

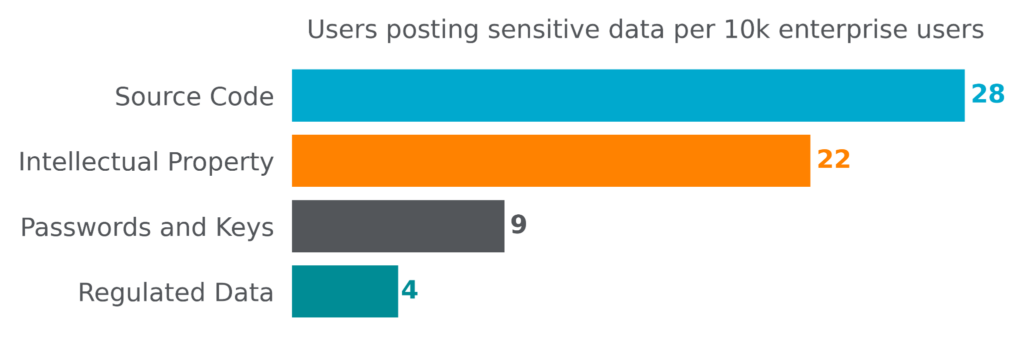

For the four-week period from May 8, 2023 through June 4, 2023, Netskope Threat Labs tracked four types of sensitive data posted to ChatGPT. Source code (mostly Python, JavaScript, and Java) led the pack, with 28 out of every 10k users submitting source code to ChatGPT. Intellectual property (as defined by organization DLP policies) came in second, with 22 out of every 10k users posting intellectual property. Passwords and keys came in third, followed by regulated data, which was mostly personally identifiable information (PII), financial data, and healthcare data.

Frequency of sensitive data posted to ChatGPT

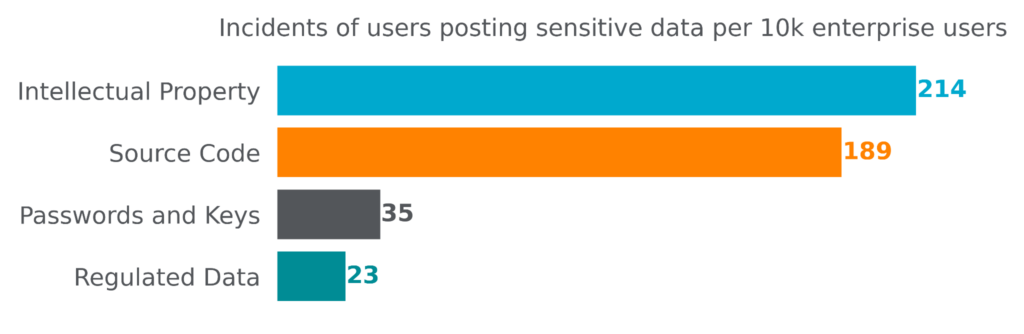

For the same four-week period, from May 8, 2023 through June 4, 2023, there were 461 incidents of sensitive data posted to ChatGPT per 10k enterprise users. Source code and intellectual property were posted most frequently, each with hundreds of posts per 10k enterprise users, while passwords and keys and regulated data were posted much less frequently, with only tens of posts per 10k enterprise users.

Safely enabling ChatGPT

Netskope customers can safely enable the use of ChatGPT with application access control, real-time user coaching, and data protection.

About this blog post

Netskope provides threat and data protection to millions of users worldwide. Information presented in this blog post is based on anonymized usage data collected, with prior authorization, by the Netskope Security Cloud platform from a subset of Netskope customers. Stats cover the period from May 8, 2023 through June 4, 2023, and reflect both user behavior and organization policy.

The stats presented in this blog post are based on more than 300,000 users in organizations that have implemented DLP policies to monitor and control what content users are posting to ChatGPT.
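
To make the "per 10k users" figures in this post concrete, the sketch below shows the normalization arithmetic and its inverse. It is an illustration only: the function names are hypothetical, and the population of exactly 300,000 is an assumption (the post says "more than 300,000"), so the implied raw counts should be read as rough lower bounds rather than reported results.

```python
def per_10k(count: float, population: int) -> float:
    """Normalize a raw count to a rate per 10,000 users."""
    if population <= 0:
        raise ValueError("population must be positive")
    return count * 10_000 / population


def implied_count(rate_per_10k: float, population: int) -> float:
    """Invert the normalization: the raw count implied by a per-10k rate."""
    if population <= 0:
        raise ValueError("population must be positive")
    return rate_per_10k * population / 10_000


# Assumption: the post only says "more than 300,000 users".
ASSUMED_USERS = 300_000

# 461 incidents per 10k users over the four-week period.
print(implied_count(461, ASSUMED_USERS))  # 13830.0

# 28 out of every 10k users submitted source code.
print(implied_count(28, ASSUMED_USERS))   # 840.0
```

Under that assumption, 461 incidents per 10k users corresponds to roughly 13,830 incidents over the four weeks, and 28 out of every 10k users corresponds to roughly 840 users posting source code.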