Cybersecurity has a bad rap for getting in the way of business. Many CIOs & CISOs dedicate a lot of time to minimizing security solutions’ performance drag on their network traffic while ensuring that the solutions continue to do their job keeping the network secure. The move to the cloud exacerbates this challenge.

A few years ago, a security team would install security services on a series of physical appliances. Firewall, URL filtering, email monitoring, threat scanning, and data loss prevention (DLP) functions, for example, might each run on their own box. The five appliances might be configured serially, such that a data packet would flow into one, the appliance would perform its standard service, then the packet would move on to the next appliance, which again would go through all its standard steps. The scalability of each service would be limited by the space available on its physical appliance. And when the hardware was maxed out, performance of the security checks—and by extension, performance of network traffic—would slow down. These challenges only became exacerbated with encrypted traffic flows and the need to decrypt, scan, and then re-encrypt traffic multiple times, for each function.

Many customers attempted to improve scalability by shifting to virtual appliances, only to run into the same “bottlenecking” issue. Whether a solution is running in the cloud or on-premises, virtualization requires administrators to assign specific resources, including CPU, memory, and disk space. Some security platforms consolidate a range of different services. This gives the suite of solutions access to more resources in aggregate, but the services have to compete for that finite quantity of all available resources, and ultimately performance is not optimized for any of them. Inherent to the design, this resource “tug of war” ultimately forces trade-offs between security processing and performance.

Whether physical, virtual, or cloud-based, these approaches typically have only so much room to scale horizontally. After that point, resource limitations introduce latency to the performance of the solutions they house. A security infrastructure operating through a traffic pipeline with a fixed diameter is eventually going to hit those limitations and bottlenecks, and the speed of the network will suffer. Ultimately, this translates into a degraded user experience and, in the worst case, users bypassing security controls altogether, which exposes the organization to risk.

Loosely coupled but independent microservices

As Netskope developed what is now our secure access service edge (SASE)-ready platform, we designed the architecture with the goal of overcoming latency that degrades the performance of traditional security solutions. To reach that goal, we rethought two aspects of how security technology fundamentally operates.

First, we consolidated key security capabilities into a single unified platform, while simultaneously abstracting out individual security functions into what we call at Netskope “microservices.” Processes such as data loss prevention (DLP), threat protection, web content filtering, and Zero Trust Network Access (ZTNA) run independently, each with its own resources. When resource limitations begin impacting the performance of one of the microservices, the Netskope Security Cloud is designed to automatically scale up (or out) that microservice by independently releasing the required resources.

For example, SSL interception is most likely to be limited by system input-output (I/O) as it decrypts traffic it receives off the network. While TLS/SSL session setup is well understood to be bound by the central processing unit (CPU) for the asymmetric key operations, once a session is established the symmetric encryption and decryption functions are no longer CPU-bound, since most modern CPUs have AES instructions built in. Accordingly, during the actual data transfer phase, the bottleneck soon becomes how fast packets can get in and out of the system (I/O, not CPU), with every packet copy adding overhead that increases the latency of overall packet processing. On the other hand, DLP tends to be more bound by the CPU because its purpose is to crack open suspicious files using processor-intensive technologies such as various regular expression engines. If DLP performance were to become constrained by CPU limitations, Netskope’s design would quickly increase processor power specifically for that DLP microservice, rather than ramping up CPU power across the board for all security services to compete over.
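To make the idea of per-service scaling concrete, here is a minimal Python sketch. It is an illustration only, not Netskope's implementation: the service names, the 80% threshold, the utilization figures, and the "add one replica" rule are all assumptions made for the example. The point it shows is that only the service that is short on its own bottleneck resource gets more capacity.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ServiceStats:
    name: str
    bottleneck: str     # the resource this service tends to run out of: "cpu" or "io"
    utilization: float  # utilization of that resource, from 0.0 to 1.0
    replicas: int       # instances currently running


def scale_decisions(stats: List[ServiceStats], threshold: float = 0.8) -> Dict[str, int]:
    """Return new replica counts for only the services above the threshold."""
    decisions = {}
    for s in stats:
        if s.utilization > threshold:
            # Hypothetical rule: add one replica to the constrained service only.
            decisions[s.name] = s.replicas + 1
    return decisions


# Made-up numbers: DLP is CPU-starved, the other services have headroom.
fleet = [
    ServiceStats("dlp", "cpu", 0.93, 4),
    ServiceStats("tls_interception", "io", 0.55, 6),
    ServiceStats("threat_protection", "cpu", 0.40, 3),
]

decisions = scale_decisions(fleet)
print(decisions)
```

In this sketch only the DLP service is scaled; the other services keep their current footprint instead of receiving resources they do not need.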

This may sound a bit like the olden days, in which each security solution ran on its own hardware, but it’s not. It’s a dramatic simplification and abstraction through the myriad Netskope microservices. This leads to the second noteworthy aspect of the Netskope architecture: the individual microservices are independent, yet remain loosely coupled. Although they independently utilize resources, such as I/O or CPU, they share the results of certain processes so that the same workloads are not unnecessarily repeated across multiple microservices analyzing the same packets. This delivers significant efficiencies in how Netskope processes large volumes of traffic, better ties together the “context” of security results, and ultimately speeds performance and drives down latency.

Faster traffic processing and more effective security

Any security product or service is going to introduce some latency. That’s a fact. Every data packet in motion that a solution touches will, based on the laws of physics, get slowed down; however, Netskope’s single-pass architecture is designed to minimize end-to-end latency. It accomplishes this by separating the “content” from the metadata, and by performing repetitive activities just once so that the results can be reused by every microservice that depends on them. I won’t cover this in detail in this blog, but the optimizations of the Netskope security private cloud, called NewEdge, further reduce latency and optimize for the best possible user and application experience. These include decisions about the integrated racks we build for deployment in our data centers, control over all traffic routing and data center locations, extensive peering with web, cloud, and SaaS providers (in every data center), and massive over-provisioning of each data center, running the infrastructure at low utilization (and maximum headroom) to accommodate unusual traffic spikes or customer adoption.

Getting back to the topic of repetitive activities performed inside the Netskope Security Cloud, let’s consider “decryption” as an example. Around 90% of the traffic that Netskope handles today is encrypted. Although our security microservices will perform different operations on the traffic once it’s been decrypted, they all require that the packet be decrypted first before being able to perform their specialized action or operation. In this case, our single-pass architecture abstracts the higher-level microservices from the decryption process, so Netskope decrypts traffic only once, then applies the multiple, diverse and policy-appropriate microservices on the traffic, before re-encrypting and sending the traffic on its way.

To drill into this further, the traffic decryption process itself results in both usable content and metadata that describes the packets being intercepted. When a Netskope microservice—such as DLP or threat protection—subsequently encounters that traffic, it has immediate access to information about who the user is, what application they are accessing, what activity they are attempting to perform, and where the associated content is in the packet stream. If the microservice needs to inspect the packet’s content, it can do so much more quickly than if it were encountering encrypted communications for the first time.
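One way to picture the metadata that travels with decrypted traffic is a small context record. The sketch below is an assumption for illustration: the `FlowContext` type, its field names, and the sample HTTP request are invented here and do not describe Netskope's internal data model. It shows how a downstream service could jump straight to the content using an offset recorded earlier, instead of re-parsing the stream.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FlowContext:
    """Hypothetical metadata produced once, at decryption time."""
    user: str
    app: str
    activity: str
    content_offset: int  # where the content starts in the decrypted stream
    content_length: int


def extract_content(stream: bytes, ctx: FlowContext) -> bytes:
    """Slice the content out of the stream using the recorded position."""
    return stream[ctx.content_offset : ctx.content_offset + ctx.content_length]


# A made-up decrypted request: headers, a blank line, then the body.
stream = b"POST /upload HTTP/1.1\r\nHost: storage.example\r\n\r\nquarterly-report"
body_start = stream.index(b"\r\n\r\n") + 4

ctx = FlowContext(
    user="alice@example.com",
    app="example-storage",
    activity="upload",
    content_offset=body_start,
    content_length=len(stream) - body_start,
)

content = extract_content(stream, ctx)
print(ctx.user, ctx.activity, content)
```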

In addition to the decryption scenario, security “policy” is another area in which common workloads can be performed once and then shared across multiple microservices. All Netskope microservices use the same policy engine, and policy lookups can be reused across services. This means security definitions are consistent across all the different Netskope Security Cloud services. Accordingly, CISOs and their security practitioners don’t have to separately define, for example, General Data Protection Regulation (GDPR) or Payment Card Industry (PCI) policy for email versus endpoint versus web or SaaS security. Policy is unified and simplified not just through a single administrative console, which Netskope customers really appreciate, but also at the lower microservices level, which further improves overall system performance.
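As a rough sketch of "define once, apply everywhere," the Python below evaluates one policy object against content from several channels. Everything here is hypothetical: the `Policy` type, the keyword list, and the sample messages are invented for illustration, and real PCI detection is far more involved than keyword matching.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Policy:
    name: str
    keywords: Tuple[str, ...]  # simplistic stand-in for real detection rules
    action: str                # what to do on a match


# Defined a single time, then shared by every channel below.
PCI = Policy(name="pci", keywords=("card number", "cvv"), action="block")


def evaluate(policy: Policy, text: str) -> str:
    """Shared evaluation path used regardless of where the text came from."""
    lowered = text.lower()
    return policy.action if any(k in lowered for k in policy.keywords) else "allow"


channels = {
    "email": "Please find my CVV attached",
    "web": "weather forecast for tomorrow",
    "saas": "Card number: on file",
}

results = {channel: evaluate(PCI, text) for channel, text in channels.items()}
print(results)
```

Because every channel calls the same `evaluate` function with the same `Policy` object, a change to the definition takes effect everywhere at once in this sketch.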

This approach also saves multiple services from repeating the same actions. For example, several security processes might require the identity of the user who initiated a specific web request (with a corresponding network packet) to be matched against a slate of user profiles. This information might be valuable for defining the policy actions on this user’s traffic. After this lookup is completed and the user identified, the information can be easily shared with the rest of the Netskope microservices. The DLP service might use it in determining how data gets classified (is it sensitive or not?), while the threat protection service could refer to this user context in malware inspection decisions (is this a known risky user?). In either case, once the identity is determined, neither microservice would need to repeat this action.

Ultimately, by reusing high-level operations in this way (e.g., decryption, policy, user identification), the single-pass architecture streamlines packet processing significantly and reduces microservices’ end-to-end latency. The effect can be substantial. With DLP, for instance, these sorts of activities may constitute 20% of the total time (and resources) that this microservice consumes. The Netskope architecture’s abstraction of microservices, while at the same time loosely coupling these services together, optimizes traffic processing to and from the cloud and minimizes the impact of security on end-user experience.
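To get a feel for what a 20% shared portion could mean, here is a back-of-the-envelope calculation in Python. The inputs are assumptions chosen only to make the arithmetic easy: three services, 100 microseconds of work per service, and the same 20% shared share for each. The post gives the 20% figure for DLP only, so treat the output as an illustration of the reasoning, not a measured result.

```python
def naive_cost(per_service_us: int, n_services: int) -> int:
    """Every service repeats the shared work itself."""
    return per_service_us * n_services


def single_pass_cost(per_service_us: int, n_services: int, shared_pct: int) -> int:
    """Shared work is done once; only the service-specific work is repeated."""
    shared = per_service_us * shared_pct // 100
    unique = per_service_us - shared
    return shared + unique * n_services


# Assumed inputs: 3 services, 100 microseconds each, 20% of that is shared work.
naive = naive_cost(100, 3)
single = single_pass_cost(100, 3, 20)
saved = naive - single
print(naive, single, saved)
```

Under these assumptions the shared work is paid for once instead of three times, and the saving grows with each additional service that would otherwise have repeated it.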

Consistency of policy and visibility at the executive level

The Netskope single-pass architecture also enables security events generated across Netskope’s Next-gen Secure Web Gateway (SWG), Cloud Access Security Broker (CASB), DLP, and other service offerings to be visible through a single incident management and administration dashboard. From the CISO’s perspective, all the Netskope microservices appear to function as one integrated solution, managed through one console. This allows security teams to respond faster, be aware of security incidents sooner, roll out new or updated policies more seamlessly, and successfully deliver on the security mission.

The various Netskope Security Cloud services (e.g., NG-SWG, CASB, DLP) also use the same data lake on the back end and produce normalized outputs that describe security events in standard terms. This shared data also unlocks advanced insights for customers, like identifying anomalous behavior or flagging risky users, through Netskope’s machine learning-based user and entity behavior analytics (UEBA), which includes user confidence scoring and intelligent event correlation based on the data collected. Normalized output makes it easier for the security team to recognize issues and reduces the effort required to pull data from the different microservices into the corporate security information and event management (SIEM) system. Security professionals spend less time on manual data cleansing and more time responding to the events that the different Netskope microservices identify. This is dramatically easier and faster than legacy approaches with multiple products, multiple consoles, differing data formats, and so on.
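Normalized output can be pictured as mapping each service's native fields onto one shared schema before events reach the SIEM. The sketch below is hypothetical throughout: the raw field names, the `FIELD_MAPS` table, and the target schema are invented for illustration and are not Netskope's actual event format.

```python
from typing import Any, Dict

# Hypothetical per-service field names mapped onto one common schema.
FIELD_MAPS = {
    "swg": {"usr": "user", "verdict": "action", "ts": "timestamp"},
    "dlp": {"user_name": "user", "result": "action", "time": "timestamp"},
}


def normalize(source: str, raw: Dict[str, Any]) -> Dict[str, Any]:
    """Rename a service's native fields to the shared schema and tag the source."""
    mapping = FIELD_MAPS[source]
    event = {mapping[key]: value for key, value in raw.items()}
    event["source"] = source
    return event


swg_event = normalize("swg", {"usr": "alice", "verdict": "blocked", "ts": 1700000000})
dlp_event = normalize("dlp", {"user_name": "alice", "result": "alert", "time": 1700000050})

# Both events now share the same keys, so they can be correlated directly.
print(sorted(swg_event), sorted(dlp_event))
```

With a common schema, a query such as "all events for this user in the last hour" needs no per-product translation step.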

Ultimately, the Netskope single-pass architecture appeals both to the security analysts and practitioners living in the weeds of protecting the enterprise and its most valuable digital assets, and to the networking teams trying to minimize latency and overall impact on the network. Plus, this single-pass approach gives senior leaders and executives, including the C-suite, the “big picture” view of the organization’s infrastructure status and security posture through powerful and insightful dashboard views.

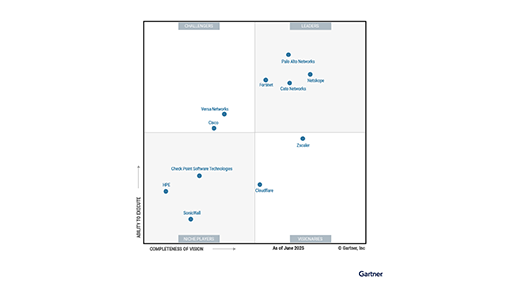

As the SASE leader, Netskope offers holistic cloud security and data protection that – through its unique single-pass architecture – simultaneously optimizes the efficacy and efficiency of security services, while delivering superior performance. It’s a big step forward for networking and security leaders looking to support their organization’s move to the cloud and digital transformation. And it’s just another example of how Netskope is executing on its mission of delivering world-class security without trade-offs.
