The AI Security Playbook

This playbook explores six core security challenges organizations face when adopting AI, along with proven, real-world strategies to address them.

Get Hands-on With the Netskope Platform

Here's your chance to experience the Netskope One single-cloud platform first-hand. Sign up for self-paced, hands-on labs, join us for monthly live product demos, take a free test drive of Netskope Private Access, or join us for a live, instructor-led workshop.

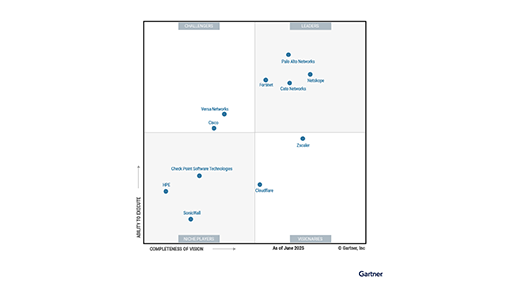

Netskope is recognized as a Leader Furthest in Vision for both SSE and SASE Platforms

2X a Leader in the Gartner® Magic Quadrant for SASE Platforms

One unified platform built for your journey

Netskope One AI Security

Organizations need secure AI to move their business forward, but controls and guardrails must not require sacrifices in speed or user experience. Netskope can help you say yes to the AI advantage.

Modern Data Loss Prevention (DLP) for Dummies

Get tips and tricks for transitioning to cloud-delivered DLP.

Modern SD-WAN for SASE Dummies

Stop playing catch-up with your networking architecture.

Understanding where the risk lies

Advanced Analytics transforms the way security operations teams apply data-driven insights to implement better policies. With Advanced Analytics, you can identify trends, zero in on areas of concern and use the data to take action.

6 Reasons Universal ZTNA is a Smart Escape from VPN and NAC Chaos

Ditch the complexity of VPNs and NAC. Learn how Universal ZTNA secures every user and device with one consistent framework.

BDO Brings Together Networking and Security to Protect a Cloud-First, AI-Friendly Infrastructure

Netskope achieves FedRAMP High Authorization

Choose Netskope GovCloud to accelerate your agency’s transformation.

The Lens

Read about the latest news and opinions from the team at Netskope. The Lens combines our blogs, our podcasts and case studies, with new content added every week.

Netskope Technical Support

Our qualified support engineers are located worldwide and have diverse backgrounds in cloud security, networking, virtualization, content delivery, and software development, ensuring timely and quality technical assistance.

AI in the Fast Lane

Netskope’s AI in the Fast Lane roadshow brings together security professionals to discuss how organizations are using AI today, and how a comprehensive security strategy can create a smarter, safer, and future-proof model.

Netskope Training

Netskope training will help you become a cloud security expert. We are here to help you secure your digital transformation journey and make the most of your cloud, web, and private applications.

Maximize your SASE ROI with Netskope Business Value Services

Start proving ROI. Netskope BVS is a complimentary consulting service that quantifies the financial and strategic impact of your SASE transformation.

Let's do great things together

Netskope’s partner-centric go-to-market strategy enables our partners to maximize their growth and profitability while transforming enterprise security.
